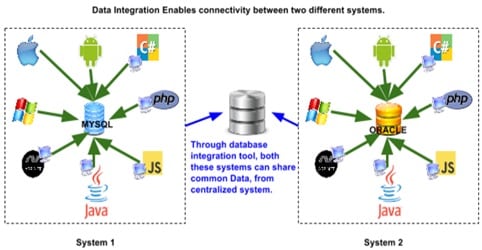

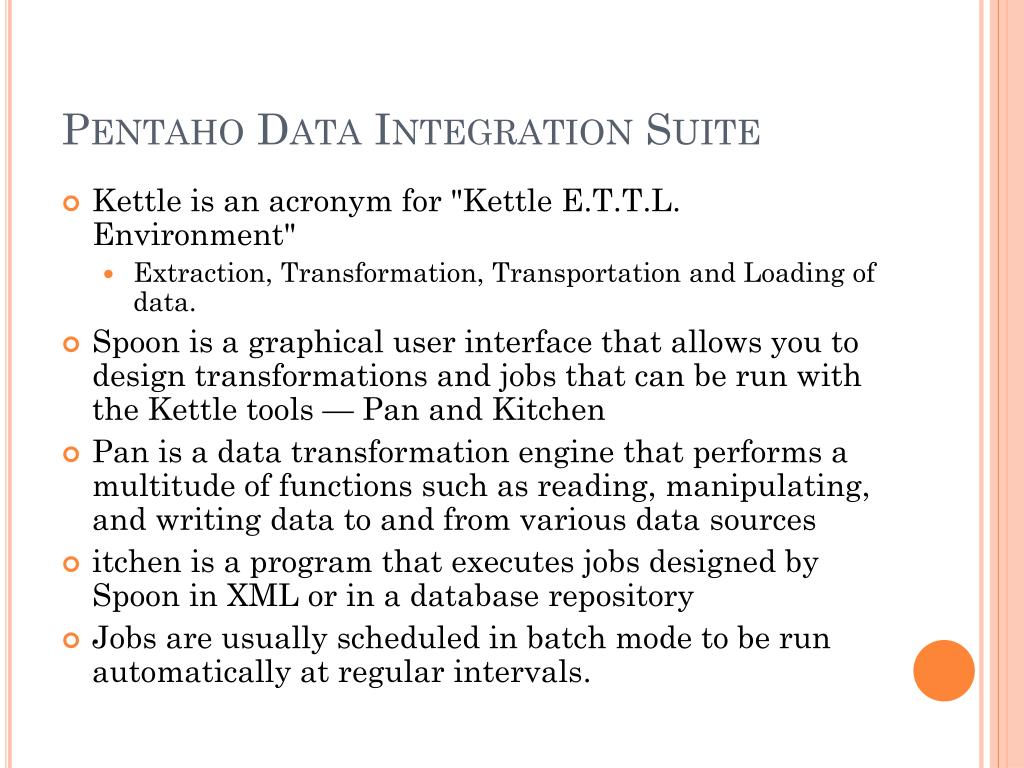

Pentaho Data Integration (PDI) vs. Microsoft SQL Server Integration Services (SSIS). ETL tools are used to move data between systems. The following tutorial is intended for users who are new to the Pentaho suite or who are evaluating Pentaho as a data integration and business analysis solution. It consists of six basic steps, demonstrating how to build a data integration transformation and a job using the features and tools provided by PDI. Step 1: Retrieve data from a flat file and load it into a relational database.

First of all, getting the data out of SQL Server and then putting it back in will always be slower than just doing the complete transformation in the database.

Pentaho Data Integration Vs SSIS Update Your Package

I'm evaluating Spoon (and like it a lot) and comparing its performance to MS SSIS (Microsoft SQL Server Integration Services 2005). I have tested Spoon and SSIS on the same machine, with the same databases, files, etc. I read an input file with receipts and store them in a table that looks like: (16) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, (1) COLLATE SQL_Latin1_General_CP1_CI_AS NULL

But I only get a performance of 3,000-5,000 writes/reads per second. To get better performance (after testing the default settings), I tried buffering more: rows in rowset is set to 100000, and commit size to 10000000 in the table output. I ran the read from file on its own and it performed 3,000 reads/sec, so that was the performance hog. Then I tried reading from the table instead: I got a read performance of 20,000/sec, but the write performance to the table was around 3,000. Read performance from file is about 50,000 rows/sec, and the write performance to the table matches that. I think Spoon has a nice and pragmatic view on how to deal with ETL, but if performance can't be fixed, it is not usable for us.

I like a good challenge, but benchmarking two different versions of Kettle is already difficult, never mind doing the same between two totally different ETL tools. There are a lot of factors that play a role. All I can say is that on my memory-constrained, no-caching, lowly 512 MB PC, with SQL Server 2K running in 9 MB of RAM, I'm getting 5K rows/s. I'm pretty sure that is the speed the hard disk can handle, because Kettle is not even close to consuming all the CPU it has available. Jens, for example, did a quick test and got 15K+ rows/s on a totally different system.

Don't you see that you have _NOT_ offended me? The reason I'm calling this discussion unconstructive is that you sent me nothing, while I sent you the transformation with which anyone can test for themselves how much they are getting on their system.

We build every 2 days, and a kettle.jar to update your package is available 5 minutes after something gets committed to the code. Finally, if you're really interested in pursuing this, I would love to work with you to eliminate possible bottlenecks in Kettle for the greater good of everyone. I hope this answers your questions. Have a lot of fun with Kettle.

Attachment: SQLServer2K.ktr (posted by AC, document type set to plain)
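The commit-size setting discussed in the thread can be illustrated outside of Kettle. The sketch below is a minimal Python example and not Kettle's Table Output step: it assumes the standard-library `sqlite3` module as a stand-in for SQL Server and a hypothetical two-column `receipts` table (`id`, plus a 16-character `code`), generates a CSV "flat file" in memory, commits once every `commit_size` rows, and reports rows per second, the same metric quoted above. In its default mode, Python's `sqlite3` opens a transaction implicitly before the first `INSERT`, so each `commit()` closes one transaction of up to `commit_size` rows.

```python
import csv
import io
import sqlite3
import time
from typing import Iterable, Tuple


def make_flat_file(n: int) -> io.StringIO:
    """Build a hypothetical in-memory CSV of n receipts: id, 16-character code."""
    buf = io.StringIO(newline="")
    writer = csv.writer(buf)
    for i in range(n):
        writer.writerow([i, f"R{i:015d}"])
    buf.seek(0)
    return buf


def load_flat_file(conn: sqlite3.Connection, lines: Iterable[str],
                   commit_size: int) -> Tuple[int, float]:
    """Insert CSV rows into receipts, committing once per commit_size rows.

    Returns (rows_loaded, rows_per_second).
    """
    sql = "INSERT INTO receipts (id, code) VALUES (?, ?)"
    batch = []
    loaded = 0
    start = time.perf_counter()
    for row in csv.reader(lines):
        batch.append((int(row[0]), row[1]))
        if len(batch) >= commit_size:
            conn.executemany(sql, batch)
            conn.commit()  # ends the transaction holding this batch
            loaded += len(batch)
            batch.clear()
    if batch:  # flush the final partial batch
        conn.executemany(sql, batch)
        conn.commit()
        loaded += len(batch)
    elapsed = time.perf_counter() - start
    return loaded, (loaded / elapsed if elapsed > 0 else float("inf"))


def new_target() -> sqlite3.Connection:
    """Create an empty in-memory target table (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE receipts (id INTEGER PRIMARY KEY, code TEXT)")
    return conn


if __name__ == "__main__":
    for commit_size in (1, 100, 10_000):
        conn = new_target()
        loaded, rate = load_flat_file(conn, make_flat_file(50_000), commit_size)
        print(f"commit_size={commit_size}: {loaded} rows, {rate:,.0f} rows/s")
```

On an in-memory database, commits are cheap, so the spread between commit sizes is smaller than against a disk-backed database. Passing a file path to `sqlite3.connect` instead makes the per-commit cost visible.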

Author: Charles